Deep Fakes: The end of face value

Artificial intelligence can now be used to create seamless fake footage of people from President Obama to major movie stars to…well, you

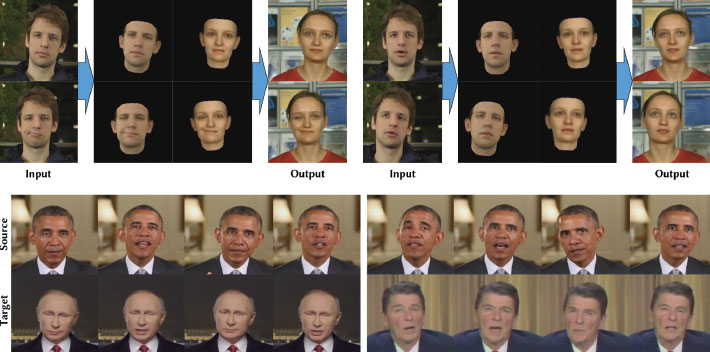

Two years ago, a professor at the Technical University of Munich published research showing how an ordinary, consumer-grade computer could process video in real time so that the face of someone in the video could be replaced with someone else’s, even matching facial expressions and mouth movements. (“Face2Face: Real-time Face Capture and Reenactment of RGB Videos”: https://youtu.be/ohmajJTcpNk). “These results are hard to distinguish from reality,” says Matthias Niessner, who runs the university’s Visual Computing Lab.

Of course, computer-generated content has been a staple of the movie industry for years. What’s different now is that it doesn’t take a Hollywood-sized budget and specialty computer equipment to do it. Now anyone can.

The technology rapidly evolved from an academic exercise to software that is widely available to download and run on a home computer.

In 2013 a popular chocolate commercial featured a very convincing resurrection of Audrey Hepburn. But the ad required months of work by VFX house Framestore and millions of dollars to produce. With deep fakes technology, we’re talking about something that can be produced in an afternoon on no budget.

Fake Terminator

In December of 2017, a Reddit user going by the name ‘deepfakes’ became famous for software that allowed the faces of famous actresses to be swapped with those of porn stars in porn videos. Deepfakes made and published porn videos with Taylor Swift, Gal Gadot and Maisie Williams. All it took was a home computer, training data in the form of publicly-available videos and photos, and a machine learning algorithm.

Soon another Reddit user, deepfakeapp, released FakeApp, an easy-to-use application for those who didn’t have a background in machine learning. FakeApp user tutorials are widely available on YouTube and elsewhere on the internet. Reddit has already suspended the deepfakes account, citing a violation of its content policy, specifically its rule against involuntary pornography.

But there are still deep fake pages up on Reddit, including videofakes and a DeepHomage page, with dozens of deep fake videos of the safe-for-work variety. They include some amusing and creative work – including the replacing of Zachary Quinto’s Mr. Spock with Leonard Nimoy’s, Lynda Carter’s Wonder Woman with Gal Gadot’s and Schwarzenegger’s Terminator with Dwayne Johnson. And inserting Nicolas Cage into deep fakes videos has become its own subgenre. Some of it is still rough around the edges but it’s improving all the time.

In April, actor/director Jordan Peele famously used FakeApp to put words in Barack Obama’s mouth in a video available through BuzzFeed. (“You Won’t Believe What Obama Says In This Video!”, BuzzFeedVideo: https://youtu.be/cQ54GDm1eL0)

“We’re entering an era in which our enemies can make it look like anyone is saying anything at any point in time,” Obama seems to say. “Even if they would never say those things. They could have me say things like, I don’t know, ‘Killmonger was right’ or ‘Ben Carson is in the sunken place.’”

The words, with lip sync automatically created by the AI, were spoken by Jordan Peele, who does a decent impression of the former President. The video itself is creepily realistic.

“This is a dangerous time,” Fake Obama says. “Moving forward, we need to be more vigilant with what we trust from the internet. This is a time when we need to rely on trusted news sources.”

Fake Politics

Deep fake videos aren’t necessarily the top concern when it comes to risks posed by artificial intelligence. Experts and regulators are worried about job losses, about military applications and cybersecurity, and about national competitiveness.

However, the topic of deep fake videos came up in a hearing in June in the US House of Representatives.

“AI techniques are increasingly able to generate convincing fake images and videos – including fakes of politicians, such as Vladimir Putin and President Trump,” OpenAI co-founder Greg Brockman told the House subcommittee on research and technology. The theme of the subcommittee hearing was ‘Artificial Intelligence – With Great Power Comes Great Responsibility’.

“In 2014,” Brockman continues, “the best generated images were low-resolution images of fake people. By 2017, they were photorealistic faces that humans have trouble distinguishing from real ones.”

Earlier this year, the Max Planck Institute went further, when it published a paper, Deep Video Portraits. Its new AI improves on previous techniques and allows for photo-realistic re-animation of portrait videos using only an input video.

Fake crime

In fact, fake celebrity porn and funny Nicolas Cage videos are just the start of what the technology can be used for. Experts are already worrying about the risks of child pornography, revenge porn, extortion and misinformation.

Videos could also be created with the goal of damaging corporate brands or political careers. Nation-states could use this technology to quickly and easily create videos designed to manipulate public opinion – both in their own countries, and elsewhere.

“If you could take different videos of people of stature, and convincingly change their messages around the world – what kind of chaos can it cause?” asked cybersecurity and counterintelligence expert Bob Anderson, a principal at The Chertoff Group, a global risk advisory firm.

It can be hard to prove that these kinds of videos are fake, he says. Sometimes, there’s embedded data or other forensic evidence that shows that the video has been altered, but that’s changing.

“It’s becoming increasingly harder for the forensic side to actually find those fingerprints,” he says.

And even if those videos are shown to be false, they can still cause a lot of damage. Given our perpetually plugged-in world, a video might go viral and create chaos, while experts are still doing due diligence in trying to determine its authenticity.

Fake war

“Countries like China and Russia, they want people to spend more time fighting amongst themselves,” Anderson says. “The worst thing that can happen – and that’s exactly what foreign nation states want to happen – is the breakdown of trust between the government and the news media.”

And it doesn’t help that trust in public institutions is near historic lows. According to the Pew Research Center, 77% of Americans trusted the people in Washington, DC always or most of the time in 1964. At the end of 2017, that number was down to just 18%.

According to Anderson, what’s needed is a co-ordinated international effort to agree to norms of behaviour when it comes to this kind of technology.

“But, right now, Russia, China, Iran and North Korea would never sign onto that,” he says.

In fact, he’s seen no signs that international pressure is having any kind of effect. “I’ve been involved with the arrests of hundreds of individuals from China, Russia, Iran and North Korea,” he says. “And I’ve got to tell you, I don’t think it’s slowed them down one iota.”

Deep fakes technology is going to have all kinds of fascinating creative applications. Onscreen performances – from the past and present – are going to become infinitely malleable. And flawless, realistic dubbing in foreign languages will become simple and cheap.

But there’s no doubt we are entering a world where literally nothing we see or hear on our screens can be taken at face value. How do we negotiate this baffling onslaught of digital doppelgangers? Jordan Peele’s Fake Obama urges vigilance in his closing line: “Stay woke, bitches.”