Adobe puts mobile centre stage at MAX

At its Adobe MAX event, the creative software giant looked to a mobile-first, influencer-driven future

The annual celebration of all things Adobe gathered creatives in Los Angeles, in early November 2019. There are usually a few famous faces at Adobe MAX, but this year featured a busload of them, including Dave Grohl, M. Night Shyamalan, photographer David LaChapelle and artist Shantell Martin. All gave insights into their creative processes, with teen singer/songwriter Billie Eilish and designer Takashi Murakami interviewed about the future of mobile creativity.

New products were rolled out across the board for design, video and immersive content creation. But it was mobile creativity that really stood out at MAX 2019.

Going mobile

After declaring at Adobe MAX a year previously that Photoshop would come to iPad, Adobe has finally made good on the promise. In the meantime, Adobe’s competitors have continued to roll out tablet versions of their own desktop image-editing tools. There are now many fans of Procreate, Pixelmator and Affinity Photo for iPad. Apps such as Astropad or Duet, which allow you to use an iPad and Apple Pencil to draw directly into the desktop versions of creative tools, are also available. But Photoshop is still an iconic product and the new iPad version has been eagerly, breathlessly awaited.

Released at MAX 2019, this version of Photoshop on the iPad is aimed primarily at masking, retouching and compositing. It also offers a ‘subset’ of tools and layers (with options for both) that closely resemble the desktop version, with the added versatility of touchscreen and support for Apple Pencil. Gestures let you zoom and pan around an image, and the app lets you work with full-size PSDs with layers intact. It works smoothly with the familiar Adobe interface – albeit a smaller one (buy the biggest iPad Pro you can afford as the icons can be a little small).

Mobile PS Version 1

However, the reception has been mixed – the initial response on the App Store was poor, excruciatingly so. Many reviewers seem to be outraged by what they see as an underwhelming product, especially after waiting a year for its release. Photoshop on the iPad does have its fans, including those who are not just ‘mobile-first’ but ‘mobile-only’, or who find the full-blown Photoshop too complicated. Adobe emphasised that this was a v1 and that users should expect more in the future. The reaction recalls the one that greeted Apple’s Final Cut Pro, when the company ‘reimagined’ its stalwart NLE to industry-wide howls of anguish. It remains to be seen if Adobe will continue to target a new, mobile-first market (very probably) or play to the old guard with the next update.

Fresco app

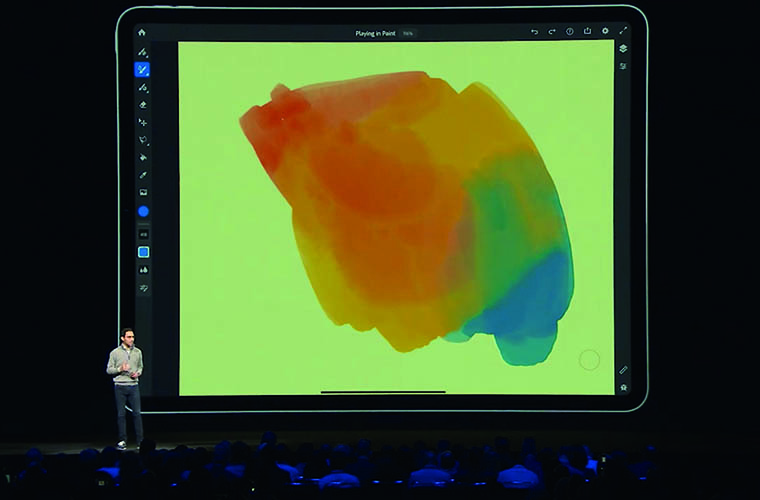

Another highlight at MAX was Fresco, a digital natural painting app offering a mix of vector and raster tools for the iPad Pro, and now Windows Surface. It offers Live Brushes to mimic the look of painting on a real-world canvas by mixing and interacting with colour on the tablet screen. The company also previewed Illustrator on the iPad, another reimagined touch-based app that promises to bring the ‘precision and versatility of the desktop experience to the tablet’.

Adobe Aero was probably the most intriguing new app introduced at MAX. Aero allows users to author and manipulate AR content on the same device used to view it, such as the iPad Pro. The AR assets might be created in applications like After Effects or downloaded from stock libraries, but they can be manipulated for AR scenes using normal touchscreen gestures, including applying animation. Aero adds interaction through a Behaviour Builder and also lets you export or share the AR content directly from the mobile app. Expect great things, or at least a lot more AR in your social media feed.

PS Camera

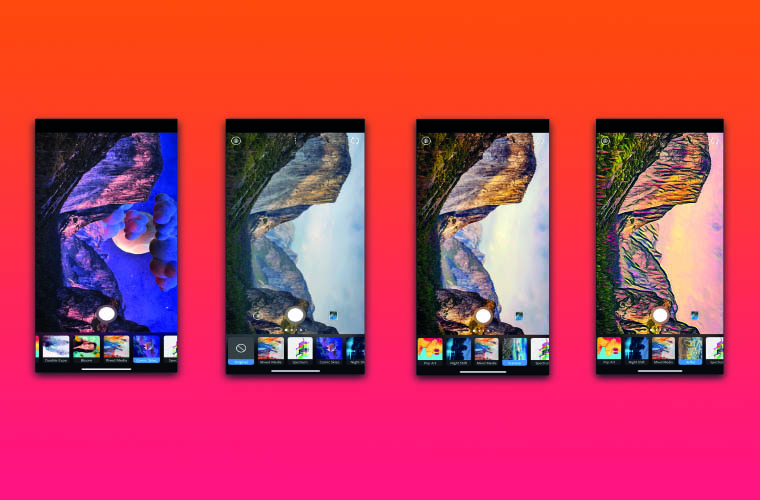

Last – but certainly not least in terms of publicity – was Photoshop Camera, a new app that ‘brings Photoshop magic to your mobile’. Though not shipping until 2020, it will use AI to gauge the scene in front of the lens, or automatically optimise images from the Camera Roll, as well as offering some manual colour correction tools. Motion and still graphics templates – known as lenses – can be created in Photoshop and the results comped smartly by AI in Photoshop Camera. It doesn’t support RAW, but the app doesn’t appear to be aimed at the pro photography crowd who would want such a facility. Those same users might also question whether the app really deserves the iconic Photoshop branding. When it ships, Photoshop Camera will include a library of lenses and effects from artists and influencers, including Billie Eilish. Introducing the work of these artists and of early adopters of Photoshop Camera, Adobe CTO Abhay Parasnis said, “When you see these, it’s clear that capture is truly the new creative”.

Smart thinking

But do these apps really represent the next content creation revolution? Perhaps, but not in the way you’d think. There’s definitely a prevailing theme of appealing to Gen Z – the latest release of cross platform/device video editing app Premiere Rush added export to TikTok to its roster of social media destinations. However, digging deeper behind the headline products and celebrity/influencer conversations reveals a few key indicators of Adobe’s roadmap for the future. With deep learning technology Adobe Sensei, Adobe has grabbed the huge potential in AI with both hands. Creative Cloud features that already use Adobe Sensei include Auto Reframe in Adobe Premiere, Adobe Stock’s Visual Search, and Match Font and Face-Aware Liquify in Photoshop. And it is what makes Photoshop Camera more than an Instagram filter clone.

Adobe Sensei

Sensei isn’t just employed in Adobe’s creative tools – it provides insights for Adobe Experience Cloud, a collection of integrated online marketing and web analytics products, where it is deployed to create personalised experiences. It also powers features in Adobe Document Cloud, such as automatic document scanning with mobiles and streamlining form-based processes.

AI on phones isn’t new; my year-old Huawei phone has AI built into the camera to recognise scenes such as ‘stage performance’ or objects such as a dog (sometimes it’s actually my cat, but never mind). It scans documents smartly, too. But Adobe is applying deep learning across the board. In an example at MAX, Adobe CTO Abhay Parasnis described how an artist could paint on video footage to change the style of a single video frame and then use Sensei to automatically apply the effect to the whole sequence. ImageTango, yet another Sneak Peek, showed how Sensei can mix a detailed shape of one sketched image and the intricate textures of a stock image, to create a combination of both as a new image. When Sensei is casually deployed to fix photos in a consumer-focussed app like Photoshop Camera, it’s plain to see that AI represents the next revolution for content creation, not just in what it’s capable of doing, but also what it allows artists to ignore or offload, while they explore even greater creativity.

Playing together

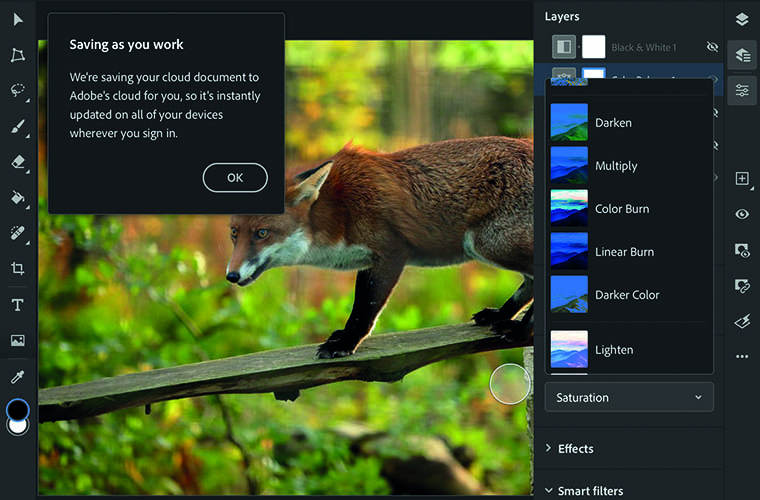

Collaboration is also seen by many as a key driver for creatives, and Adobe has embraced this with the MAX announcement of cloud documents, cloud-native files that you can open and edit in compatible apps. Not just between people but platforms, too. If you use Adobe Fresco on Surface or the iPad, for example, cloud documents will let you seamlessly transfer your work to either device. Illustrator for the iPad will do the same when it ships. Adobe claims your work is always updated, across iOS, Mac and Windows devices. When I used the trial versions of Photoshop for iPad and Photoshop 2020 on my Mac, I found it’s a case of going to Cloud Documents on each device to retrieve the file. That in itself works fine, and certainly is useful for portable workflows, but it doesn’t feel exactly like the seamless roundtripping workflow that Premiere Pro and After Effects users enjoy.

Another collaboration tool is a forthcoming livestreaming feature for Adobe apps. This will allow users to stream work online from within applications and share links with their followers to watch and comment live. Currently being trialled with some users of Adobe Fresco, the Twitch-style move has potential to be of great benefit to educators as well as designer/client review sessions.

Dump the desktop?

So, are creatives likely to move en masse to mobile, and mothball their Macs? That could well be a generational thing – 17-year-old Billie Eilish discussed during her keynote how she edited together her stage show visuals using her iPhone, which led to her collaboration on a music video with Takashi Murakami. But it wouldn’t appear to be the direction of travel for everyone.

Eagerly awaited: It was announced at 2018’s event – and now Photoshop for iPad is here

ISO is a Glasgow-based digital media and software studio, designing, directing and building large-scale interactive and immersive media projects as well as TV titles and motion graphics. As such it’s a good place to gauge the real-world impact of mobile content creation tools.

“Many of these [MAX-announced apps] are things on our radar to check out,” says designer Mark Breslin, one of ISO’s directors. “They seem very focussed on ‘mobile creativity’ – very much geared at the Instagram/Snapchat/TikTok culture. I think these might find an audience in content creators, influencers, the general public and so on, rather than designers as such, where I think the default is still working at a large screen or MacBook. I don’t see that much benefit with these apps if your usual workflow is a jump between a desktop and MacBook Pro.
