Haptics: Feeling touchy

Posted on Mar 5, 2021 by Neal Romanek

In a time when people are longing for the human touch, haptics is holding out a hand

It’s common practice to introduce every article on the future of haptics with a reference to Aldous Huxley’s Brave New World and the popular entertainment depicted in it called the “feelies”. So now that we’ve done that, it’s worth noting that the future of haptics may actually be even more outlandish. Huxley’s feelies featured people congregating in specially dedicated theatres to experience stories where they could feel every sensation the characters felt, but global connectivity and a growing sophistication in haptics technologies may mean that in the future you’ll be able to feel your content, any place at any time – or your friends or associates, no matter where they are.

Haptics – an umbrella term describing technology which uses the senses of touch and motion to simulate real physical interaction with a constructed environment – has actually been with us for a while. I remember oohing and aahing at haptic computer mice 20 years ago, which let you feel your browsing experience: little actuators marked the borders of web pages with a subtle bump, or gave a slight but appropriate resistance when you used the scroll bar. As early as 2001, papers were being written on how force feedback haptics in computer peripherals might physically impact their users. Would haptic mice be an accelerant for RSI? (See Haptic Force-Feedback Devices for the Office Computer: Performance and Musculoskeletal Loading Issues by Jack Tigh Dennerlein and Maria C Yang.)

The Logitech iFeel mouse, launched in 2001, was a three-button scroll optical mouse with actuators powered by USB, which translated mouse movement into haptic sensations. The mouse was compatible with Internet Explorer and Netscape browsers. The technology inside the iFeel mouse was called TouchSense and was developed by one of the pioneering companies in the field, Immersion.

“Immersion was founded with the idea that haptics was missing from digital experiences,” says the company’s vice-president of products and marketing, John Griffin. “Touch is your largest sensory organ, so why wouldn’t game designers and content developers bring that sense of touch to the experiences they’re creating?”

Ubiquitous video connectivity has helped people combat the isolation of this year’s stay-at-home and social distancing orders, but while these technologies have been transformative, the human touch is still absent. Could the old AT&T slogan “reach out and touch someone” finally become a reality?

“Until recently, the experience you could produce with haptics was limited – think of a cellphone or a pager buzz,” explains Griffin. “What’s changed in the last couple years is the cost and complexity of implementing haptics has come down. The actuators – the motors – have become cheaper, and the level of sophistication and performance has improved dramatically. Now you can get very nuanced, high-fidelity effects from these devices.”

Tools and techniques for developing haptic experiences have become more accessible, too. “Haptic designers can create haptic effects like an audio sound designer would create sound or graphic artist would create graphic effects. It’s not quite at that level of maturity, but it’s heading in that direction,” he adds.

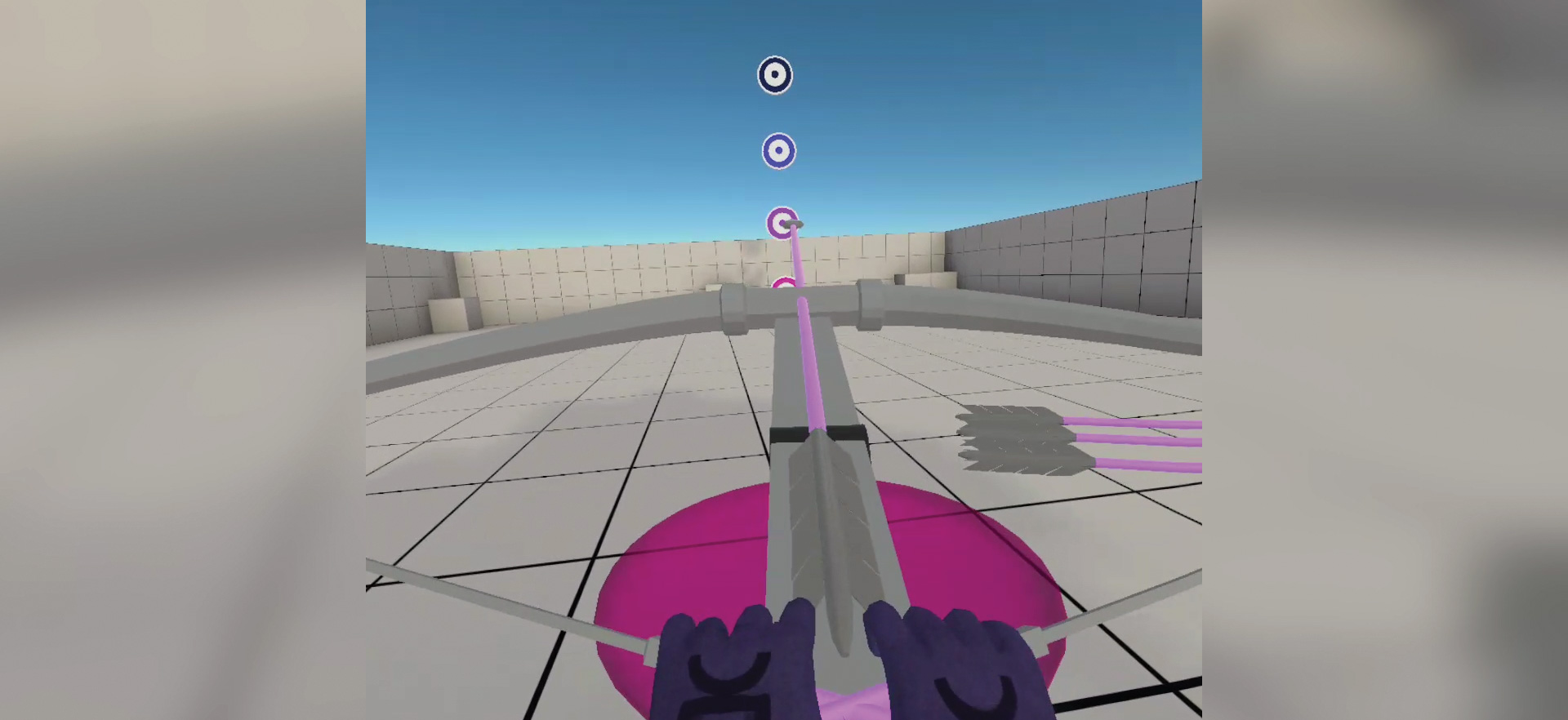

Making for the people

Sony’s new PlayStation 5 gaming console is creating a lot of buzz (!) for its sophisticated haptics system. The console’s DualSense controller uses new high-fidelity haptics to produce wide-bandwidth effects and textures. The controller also features force feedback on the triggers, which can mimic the resistance a game character might encounter when manipulating objects, providing information about movement, weight and textures. The variable feedback can also simulate a character’s internal state – some games make the triggers harder to press when the character is tired or injured.

In the past, haptics development was very siloed, with developers and manufacturers delivering solutions almost on a product-by-product basis with no wider haptics ecosystem for development and collaboration to take place in. This made it harder for the technology to spread widely and for developers to work experimentally or creatively with haptics. But companies working with haptics are beginning to come together to establish industry-wide standards and best practices.

“We’ve led an effort to promote haptics standards in the Moving Picture Experts Group (MPEG) and put in proposals to make haptics a first-order media type. That would mean a haptic track could live alongside audio and video content in an MPEG container,” explains Griffin.

The bandwidth required for haptics data is fairly limited, nothing like that of video or audio. A small amount of data sent to a user’s haptic device can produce a big effect on the audience, so even complex haptic experiences may not be that demanding to livestream.
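To put rough numbers on that bandwidth point, here is a back-of-the-envelope sketch. The CD audio figures are the standard ones. The haptic figures (a single actuator channel, 8kHz, 8-bit) are purely illustrative assumptions, not taken from any product or standard, chosen on the basis that vibration sensitivity is usually put well below 1kHz.

```python
def bitrate_kbps(sample_rate_hz: int, bits_per_sample: int, channels: int) -> float:
    """Uncompressed bitrate in kilobits per second."""
    return sample_rate_hz * bits_per_sample * channels / 1000


# CD-quality stereo audio: 44.1 kHz, 16-bit, two channels.
cd_audio = bitrate_kbps(44_100, 16, 2)

# ASSUMPTION for illustration only: one vibration channel, 8 kHz, 8-bit.
haptic = bitrate_kbps(8_000, 8, 1)

# How many times more data the audio stream needs than the haptic one.
ratio = cd_audio / haptic
print(f"audio {cd_audio} kbps, haptic {haptic} kbps, ratio {ratio:.2f}x")
```

Under these assumed figures, the uncompressed haptic stream is a small fraction of the audio stream, and video would dwarf both. Real systems may differ considerably depending on the number of actuators and the encoding used.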

“In lockdown, the idea of using technology to facilitate touch has become a bigger thing – in everything from day-to-day enterprise communications to sex tech. Touch is such an integral part of who we are and what we do, from shaking hands to giving someone a hug or tap on the shoulder. So what are ways that existing products can bring users closer together? And how could the sense of touch be deployed in new ways?” asks Griffin.

Finding a language of touch

Creating haptic experiences on a large scale requires a common language. Engagement with standards bodies – like Immersion’s proposals to MPEG – is one necessary step. Another is developing tools that will allow new entrants to create their own haptic experiences without needing a huge tech company backing them.

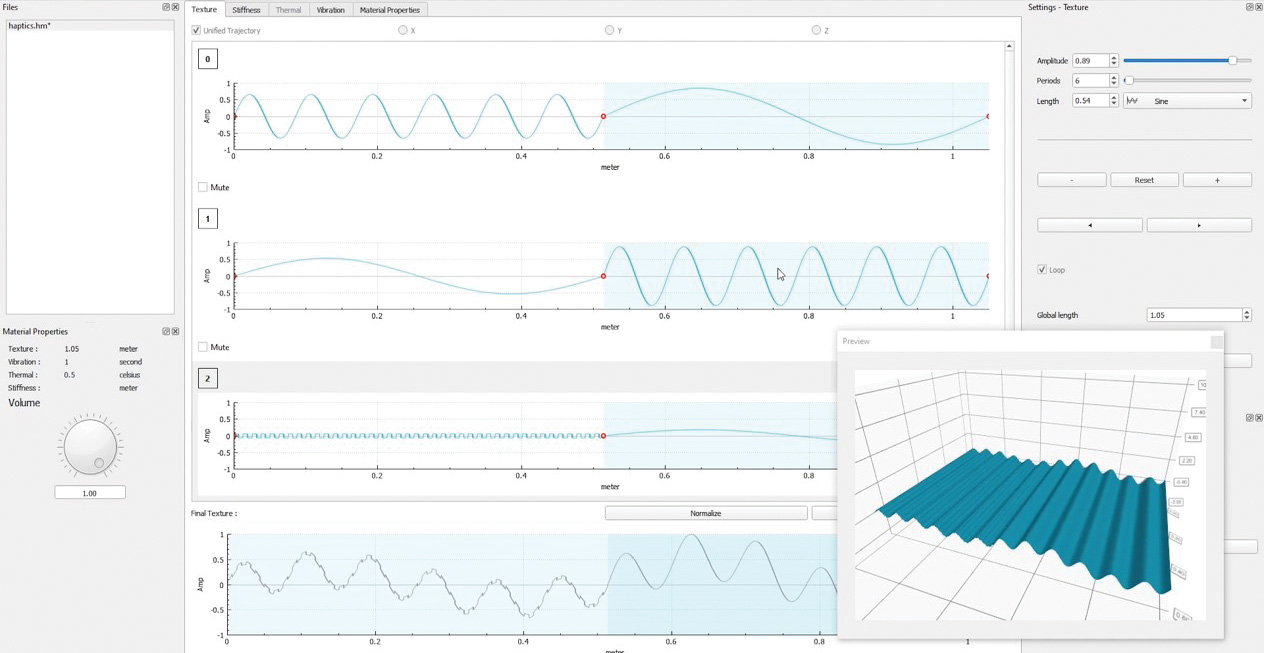

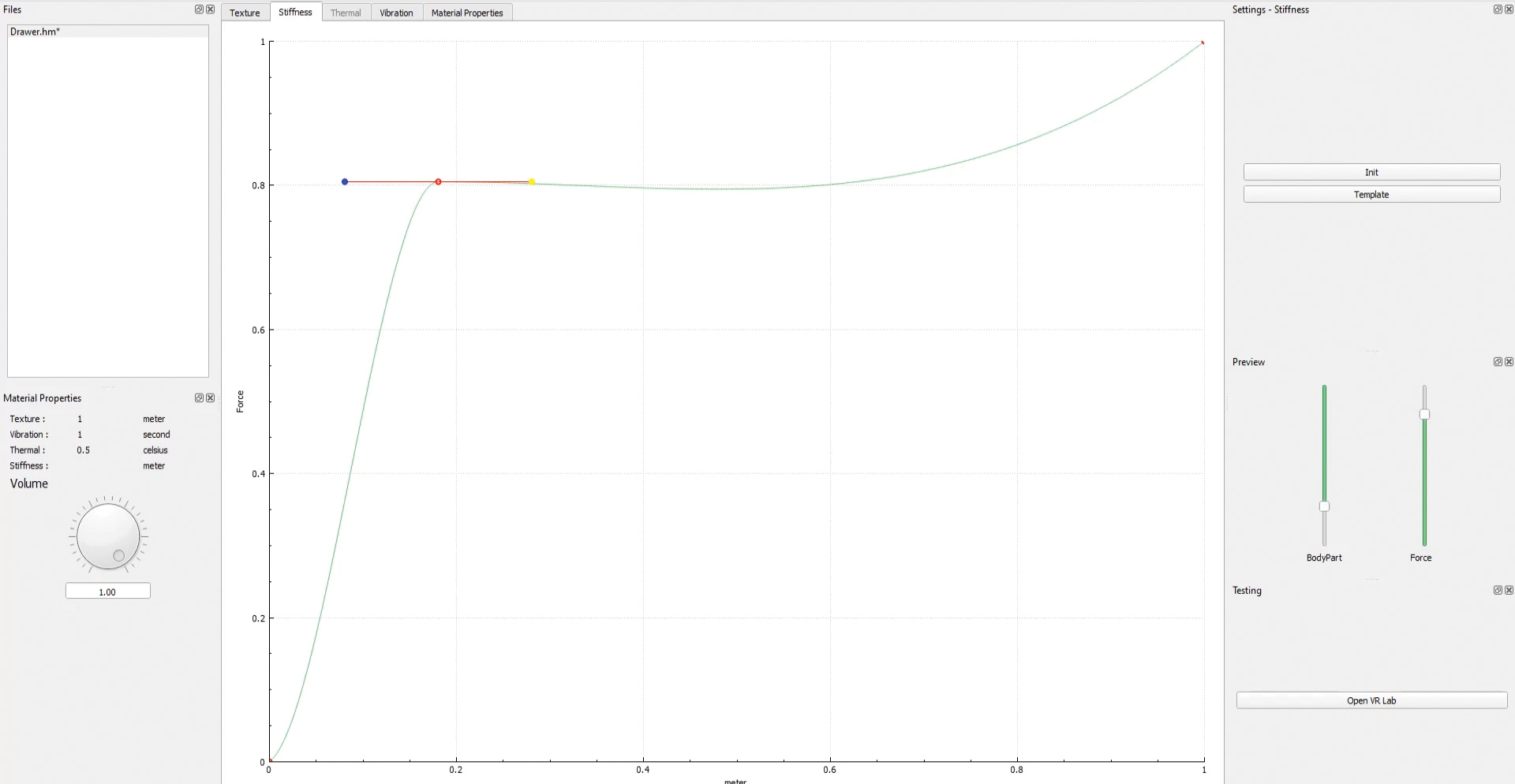

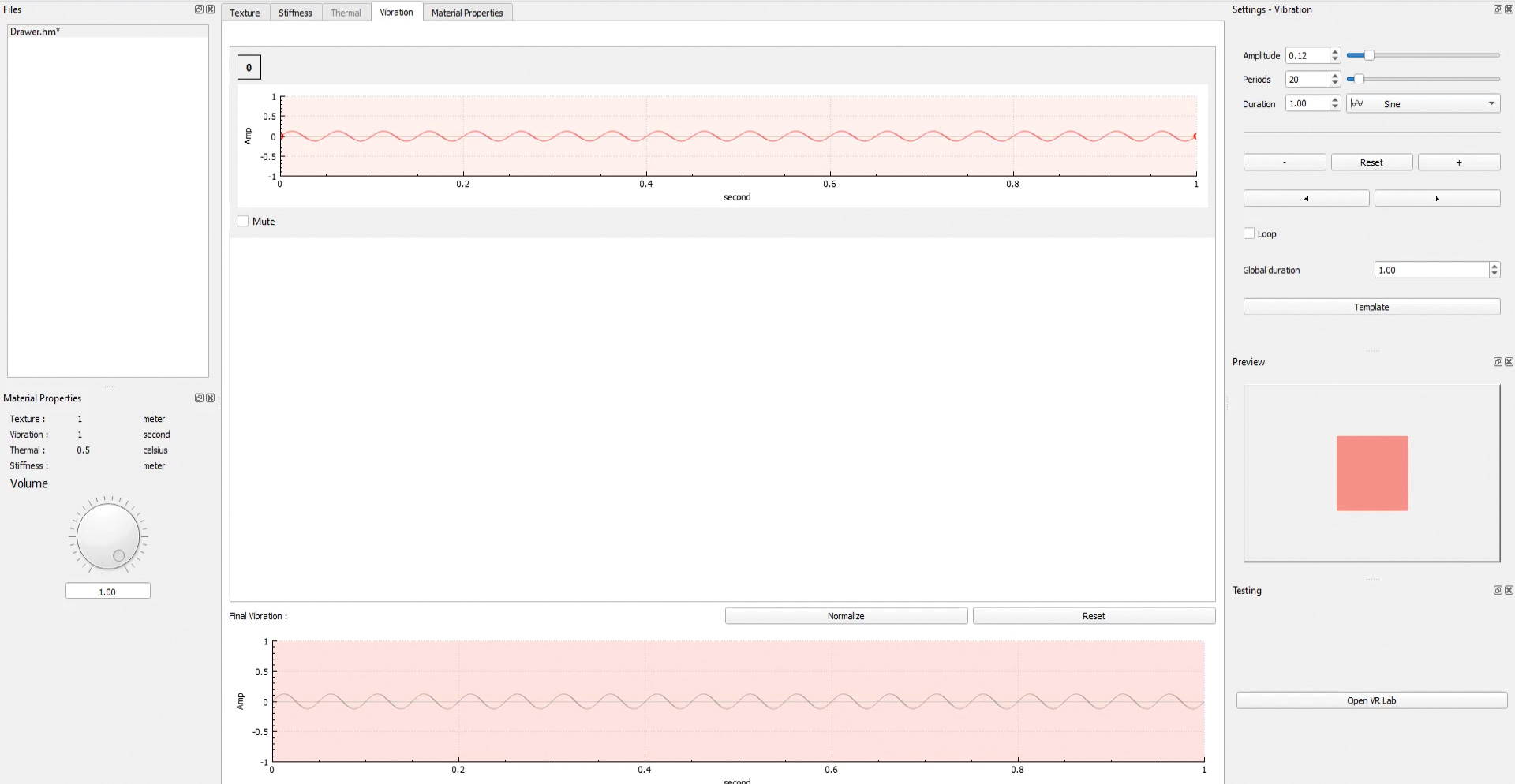

French tech company Interhaptics has launched Haptic Composer, an application for simplifying and democratising haptics design that aims to be for haptics what Adobe Photoshop is for image editing. Another piece of software, Interaction Builder, is a low-code 3D engine plug-in for developing realistic hand interactions for virtual environments.

“Interhaptics is a design system for digital touch and software that allows you to render digital touch for any kind of haptics device in the world,” explains the head of the company, Eric Vezzoli.

“The problem we’re solving is that the haptics market is fragmented. You have several different technologies, each with their own way of doing the design and rendering. The way you code haptics for a phone is different from how you code for a controller, which is different from code for an exoskeleton. The market doesn’t develop, because it’s too much of an investment to create a piece of software that isn’t multiplatform.”

Interhaptics is also working with standards bodies, including the Haptics Industry Forum, to try to define common practices for haptics content, but Vezzoli believes it will be years before they come to any solid agreements on interoperability. “We have started defining the value chain in haptics – who does what and how – but it will take a few years to establish the standards and execute them and make them useful. The technology needs to be there first, so we’re offering this opportunity to device manufacturers and developers to create haptic-enabled content that works on multiple platforms.”

The Interhaptics project came out of academia and an attempt to build a language for haptics from scratch. Treading the same paths as audio and video technologists in past generations, they tried to define the basic human perceptions around touch.

“For video, they started with three colours and with a single pixel that can represent any one of those colours. For touch, we asked: what are the important haptic sensations that need to be encoded for multiple haptics devices to work? It is not a settled argument, but it more or less came down to four different perceptions – force, textures, vibrations and temperature,” explains Vezzoli.

Using these four parameters, Interhaptics software simplifies the design process for haptics and allows simple export of haptic tracks for each parameter, which can be embedded and triggered as necessary, defined by location (eg texture) or time (eg vibration) and, importantly, can be easily tweaked and edited.
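For readers who think in code, the idea of one editable track per perception, keyed either by location or by time, might be sketched like this. It is a hypothetical illustration only: the names `Perception`, `Domain`, `HapticTrack` and `sample` are invented here and are not Interhaptics’ actual file format or API.

```python
from dataclasses import dataclass, field
from enum import Enum


class Perception(Enum):
    """The four perceptions named by Vezzoli."""
    FORCE = "force"
    TEXTURE = "texture"
    VIBRATION = "vibration"
    TEMPERATURE = "temperature"


class Domain(Enum):
    SPACE = "space"  # track is keyed by position, eg a texture
    TIME = "time"    # track is keyed by elapsed time, eg a vibration


@dataclass
class HapticTrack:
    """Hypothetical track: (key, intensity) pairs for one perception."""
    perception: Perception
    domain: Domain
    keyframes: list[tuple[float, float]] = field(default_factory=list)

    def sample(self, key: float) -> float:
        """Linearly interpolate intensity at key, clamping outside the range."""
        if not self.keyframes:
            return 0.0
        pts = sorted(self.keyframes)
        if key <= pts[0][0]:
            return pts[0][1]
        if key >= pts[-1][0]:
            return pts[-1][1]
        for (k0, v0), (k1, v1) in zip(pts, pts[1:]):
            if k0 <= key <= k1:
                return v0 + (v1 - v0) * (key - k0) / (k1 - k0)
        return pts[-1][1]


# A short buzz that ramps up over 0.1 seconds, then fades out by 0.5 seconds.
buzz = HapticTrack(Perception.VIBRATION, Domain.TIME,
                   [(0.0, 0.0), (0.1, 1.0), (0.5, 0.0)])

# "Easily tweaked": soften the peak without touching anything else.
buzz.keyframes[1] = (0.1, 0.6)
```

Because the track describes the sensation rather than a specific motor command, the same data could in principle be rendered differently by a phone, a controller or an exoskeleton, which is the multiplatform point Vezzoli makes above.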

The human-machine-human interface

Densitron has been in the media tech business for almost 40 years, primarily as a developer of display technology, producing early LED displays and mobile devices. But as its business has grown, so has its notion of what ‘display’ really means. Densitron is working to layer new technologies on to its display systems, including a full gamut of HMI – that’s human-machine interface – technologies.

“We identified that HMI is going through kind of a rebirth,” says Matej Gutman, Densitron’s technical director for embedded tech. “A display used to be a simple device that would show you pieces of information. Displays then evolved into touch surfaces, then with mobile, you have tactile feedback now. There’s an evolution going on. Displays are becoming more and more intelligent and more things are being packed inside the word ‘display’.”

Gutman points out that human-machine interfaces have been a reality for many years – since a human first used a rock as a hammer, one could argue – but what is newer, and more interesting in today’s world, are human-machine-human interfaces.

Densitron is developing some new technologies, to be announced next year, which aim to make haptic experiences on screens more lifelike and easier to deploy across multiple devices. Again, ease of deployment seems to be key to a future where haptics technologies flourish.

“We have seen our competitors try to enter the market with a really nice technology, but the integration into an OEM product would be hard. They offered a product, but you couldn’t really slot it into any other applications. They offered the finished thing and it was kind of an enclosed black box. There’s going to be a major shift in the standardisation,” says Gutman.

The ballooning demand for presence at a distance – driven now by coronavirus, but also necessary in a low-carbon world where flying will be strictly limited – is going to create a big market, Gutman believes. And there are already fascinating technologies being trialled. “I’ve seen wonderful products that are based on touch – ones that can simulate different surfaces. Let’s say you are a manufacturer of cloth – you can upload micro structures, and the person on the other side, when they touch the surface, can actually feel the fabric. There’s definitely going to be a physical interaction that we will need to take into account,” he says.

“We are mastering video communication. In terms of touch and tactile feedback, we’re lagging behind. But I’m sure there’s going to be a genius new way of doing that. There’s been lots of lone wolf development going on for the past decade, but our current situation is definitely going to put haptics in the spotlight. In five or ten years, some crazy projects will come to life, I can guarantee you that.”

This article first featured in the Winter 2020/21 issue of FEED magazine.