From football to forests, remote production is here

Posted on Mar 10, 2021 by Adrian Pennington

Live production is not only going remote, but automated too. Offsetting the challenges of Covid restrictions is a boom in AI-boosted production tech.

Streaming Scottish Championship football

With no gate receipts and no concession revenue, sports production has no choice but to adopt new technologies that make it more efficient, cheaper and more flexible. If sports organisations can’t engage fans and monetise the result, it could be game over.

One cost-effective and Covid-safe solution is being provided by automated production technology. Every Scottish Championship football club has had a remote production system installed for the 2020/21 season, with the goal of enabling overseas supporters to watch their teams live over a new streaming platform launched by the SPFL (Scottish Professional Football League). Clubs can also use the platform for broadcasting matches domestically when stadium attendance restrictions are in place. The service is run by OTT specialist StreamAMG as part of a five-year deal with the league.


The setup is based on Pixellot’s automated camera system, which costs as little as $40 to $100 per game to use. Its fixed, unmanned cameras have built-in ball-tracking technology and produce live HD footage at 50-60fps, which is then broadcast via a centrally operated streaming platform.

“Pixellot’s camera is located in the middle of a stand overlooking the field of play,” explains Pixellot’s director of marketing, Yossi Tarablus. The system captures four camera views, which are stitched into a single panorama and used to produce coverage resembling a TV broadcast.

“Using machine learning, based on a football-specific algorithm, and computer vision, we simulate camera operation and vision mixing to follow the flow of play – and we have graphics and can replay multiple-angle shots. We can also manipulate the image for things like light optimisation – brightening shadowy areas of the pitch, for example,” he adds.
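Pixellot has not published how its virtual camera works, but the general idea of simulating a camera operator over a stitched panorama can be illustrated. The following is a hypothetical Python sketch, assuming a tracker already supplies the ball’s horizontal pixel position for each frame (or `None` when the ball is lost). The `VirtualCamera` class, the exponential smoothing and the parameter values are illustrative assumptions, not Pixellot’s algorithm.

```python
from dataclasses import dataclass, field
from typing import Optional, Tuple


@dataclass
class VirtualCamera:
    """Hypothetical virtual camera panning across a stitched panorama.

    Illustrative only, not Pixellot's method. The crop follows the tracked
    ball horizontally, smoothed so the picture pans rather than jumps.
    """
    pano_width: int          # width of the stitched panorama, in pixels
    view_width: int          # width of the TV-style crop, in pixels
    smoothing: float = 0.2   # assumed value: 0 = never move, 1 = snap to ball
    centre: float = field(init=False, default=0.0)

    def __post_init__(self) -> None:
        # Start framed on the middle of the pitch.
        self.centre = self.pano_width / 2

    def update(self, ball_x: Optional[float]) -> Tuple[int, int]:
        """Return the (left, right) pixel bounds of the crop for one frame."""
        if ball_x is not None:
            # Exponential smoothing: move a fraction of the way to the ball.
            self.centre += self.smoothing * (ball_x - self.centre)
        # If the ball is lost (None), hold the current framing.
        half = self.view_width / 2
        # Clamp so the crop never runs off either edge of the panorama.
        self.centre = min(max(self.centre, half), self.pano_width - half)
        left = int(round(self.centre - half))
        return left, left + self.view_width


if __name__ == "__main__":
    cam = VirtualCamera(pano_width=8000, view_width=1920)
    print(cam.update(None))  # (3040, 4960): ball not found, stay centred
    print(cam.update(8000))  # (3840, 5760): pan part-way towards the ball
```

A single smoothing factor simply trades responsiveness against jerky framing; a production system would presumably also handle zoom and anticipate the flow of play.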

StreamAMG then transcodes the live feed and delivers it to the front-end match centre, also built and hosted by StreamAMG.

“Our fixture and event management system delivers real-time JSON feeds of fixture data, geo holdback data and custom team data to the front end,” explains Peter Fox, StreamAMG marketing manager. “We also host and serve on-demand video via our CloudMatrix content distribution system, and handle login and user management via CloudPay.”
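StreamAMG’s feed format is not described here, so the following example invents a minimal one purely to show what geo holdback data might do on the front end: withhold a live stream from viewers in specified countries until a cut-off time. The field names (`geo_holdback`, `blocked_countries`, `until`), the placeholder teams and the `can_stream` helper are all hypothetical.

```python
import json
from datetime import datetime, timezone

# Hypothetical feed shape: StreamAMG's real schema is not given in the article.
SAMPLE_FEED = """
{
  "fixtures": [
    {"id": "f1", "home": "Team A", "away": "Team B",
     "kickoff": "2021-03-13T15:00:00+00:00",
     "geo_holdback": {"blocked_countries": ["GB"],
                      "until": "2021-03-13T23:59:00+00:00"}},
    {"id": "f2", "home": "Team C", "away": "Team D",
     "kickoff": "2021-03-13T15:00:00+00:00",
     "geo_holdback": null}
  ]
}
"""


def can_stream(fixture: dict, country: str, now: datetime) -> bool:
    """Hypothetical check: may a viewer in `country` watch `fixture` at `now`?"""
    holdback = fixture.get("geo_holdback")
    if not holdback:
        return True  # no restriction attached to this fixture
    if now >= datetime.fromisoformat(holdback["until"]):
        return True  # the holdback window has expired
    return country.upper() not in holdback["blocked_countries"]


if __name__ == "__main__":
    feed = json.loads(SAMPLE_FEED)
    now = datetime(2021, 3, 13, 15, 30, tzinfo=timezone.utc)
    for fx in feed["fixtures"]:
        print(fx["id"], can_stream(fx, "GB", now), can_stream(fx, "US", now))
    # f1 False True
    # f2 True True
```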

Independent of StreamAMG, Pixellot already has its automatic production solution installed at ten clubs in Scottish Leagues One and Two and provides them with a ‘white label’, managed OTT solution. That came into its own at the start of the season when fans were unable to attend. However, the system is not without its glitches. During League Two’s Albion Rovers v Ayr United match in early October, some fans were unable to see the feed, while others complained the camera failed to show goals during Ayr’s 5-2 win.

The automated sports production market is rocketing, and Israel-based Pixellot reckons it has the lion’s share. More than 100,000 hours of live sport were streamed last month using its technology. The bulk of this is in US education, where Pixellot is currently outfitting more than 20,000 systems in high schools in partnership with PlayOn! Sports.

The project is funded by syndicating content to third-party publishers such as Facebook, by sponsorship and by a $10 monthly fee on PlayOn’s OTT platform. Given the massive pool of potential sports content across the country’s high schools, PlayOn’s goal is to produce more than one million live event broadcasts per year by 2025.

“The automatic production field is causing the sports industry to rethink its approach. Covid accelerated the automated content creation evolution,” concludes Tarablus.

Watching nature

Springwatch, Autumnwatch and Winterwatch are some of the BBC’s biggest live productions. The shows feature live studio and on-location content of British wildlife and natural habitats across different parts of the country. Springwatch, which runs in June, is the series’ centrepiece, but when plans were being drawn up for this year’s broadcast, the UK was in the early stages of lockdown. There was discussion about pre-recording the show, but the producers were intent on finding a way to keep it live.

Robert Dawes, senior research engineer at BBC R&D, says: “We had to quickly change our system from one based on-site in the outside broadcast truck to one that operated remotely in the cloud.”

Springwatch is broadcast three days a week for three weeks and uses up to 30 camera feeds, many giving rarely seen views, like the inside of bird nests. Getting live feeds from a large number of locations is a regular technical challenge for the team in any year, but this year’s production also had to carry live links from its four presenters, each working remotely. Satellite links have been used in the past, but they proved too costly when each presenter was forced to broadcast from their own local region. In the event, two presenter links were satellite, and two were a mix of LiveU cellular and IP live streaming.

OB partner Timeline Television set up a gallery at the BBC’s Ealing Studios to receive all incoming links. This main control area housed the director, vision mixer and the script supervisor – all socially distanced. A sound supervisor and EVS operator worked in an adjacent area. The show’s producers worked from home and were able to view Timeline’s virtual gallery through remote access over IP.

Alongside its own cameras in nest boxes and hides, Springwatch took feeds from partners such as the Royal Society for the Protection of Birds (RSPB) and the Dorset Wildlife Trust, which were offered as an additional public stream. The production ramped up its use of computer vision to automate logging of these rushes, which would otherwise have added to the show’s already high shoot ratio.


“With no extra staff or facilities available to monitor and record the partner cameras, we investigated how we could apply technology to perform tasks automatically,” says Dawes. “By doing this, we were able to extract video clips that were then used by the digital production team in their live shows.”

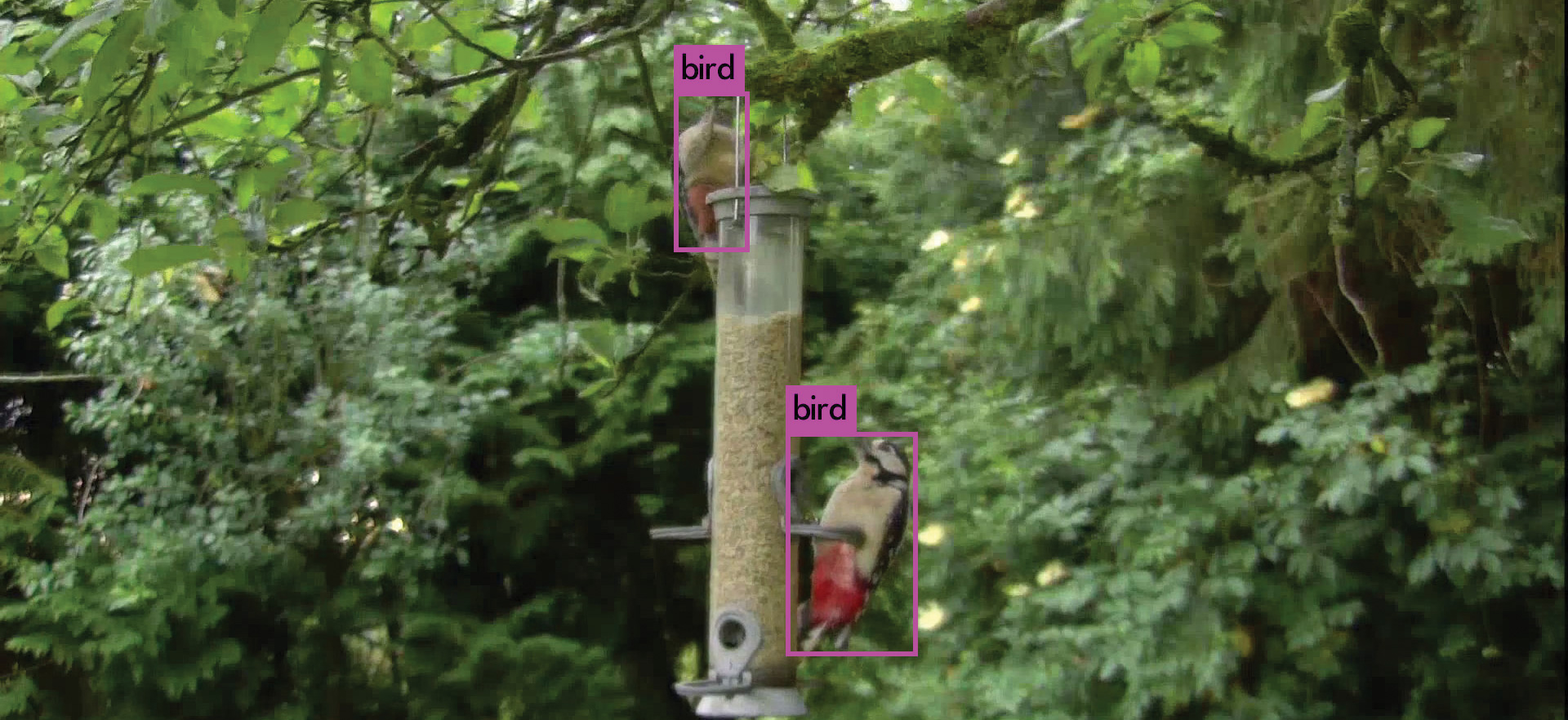

The resulting tool is based on the YOLO (You Only Look Once) neural network and runs on the open-source Darknet machine-learning framework. It uses object detection to automatically find and locate animals in each scene.

“We’ve trained a network to recognise birds and mammals, and it can run just fast enough to find and then track animals in real-time on live video,” Dawes says. “We store the data related to the timing and content of the events, and use this as the basis for a timeline we provide to members of the production team. They can use the timeline to navigate through the activity on a particular camera’s video.

“We also provide clipped-up videos of the event. One is a small preview to allow for easy reviewing, and a second is recorded at original quality with a few seconds’ extra video either side of the activity. This can be immediately downloaded to be viewed, shared and imported into an editing package.”
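The step Dawes describes, turning detections into a timeline and padded clips, can be sketched roughly as follows. This is a hypothetical Python illustration, not BBC R&D’s code: it assumes the detector (YOLO, in the BBC’s case) has already produced a list of `(timestamp_seconds, label)` pairs, and the `max_gap` merging rule and three-second `padding` are made-up values standing in for “a few seconds”.

```python
from dataclasses import dataclass
from typing import List, Set, Tuple


@dataclass
class Event:
    """One stretch of animal activity on a camera (hypothetical structure)."""
    start: float        # seconds: first detection in the event
    end: float          # seconds: last detection in the event
    labels: Set[str]    # e.g. {"bird", "mammal"}


def group_detections(detections: List[Tuple[float, str]],
                     max_gap: float = 2.0) -> List[Event]:
    """Merge per-frame detections into timeline events.

    Detections no more than `max_gap` seconds apart (an assumed threshold)
    are treated as part of the same event.
    """
    events: List[Event] = []
    for t, label in sorted(detections):
        if events and t - events[-1].end <= max_gap:
            events[-1].end = t             # extend the current event
            events[-1].labels.add(label)
        else:
            events.append(Event(start=t, end=t, labels={label}))
    return events


def clip_range(event: Event, padding: float = 3.0,
               duration: float = float("inf")) -> Tuple[float, float]:
    """Clip bounds with extra video either side, clamped to the recording."""
    return max(0.0, event.start - padding), min(duration, event.end + padding)


if __name__ == "__main__":
    dets = [(10.0, "bird"), (10.5, "bird"), (11.5, "bird"),
            (30.0, "mammal"), (31.0, "bird")]
    for ev in group_detections(dets):
        print(sorted(ev.labels), clip_range(ev, duration=3600.0))
    # ['bird'] (7.0, 14.5)
    # ['bird', 'mammal'] (27.0, 34.0)
```

Each `Event` would become one entry on the production team’s timeline, while `clip_range` gives the in and out points for the full-quality clip.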

A similar workflow was used for Autumnwatch, which aired in October, and more AI-assisted production is likely for Springwatch 2021.

This article first featured in the Winter 2020/21 issue of FEED magazine.